Robert Youssef @rryssf_

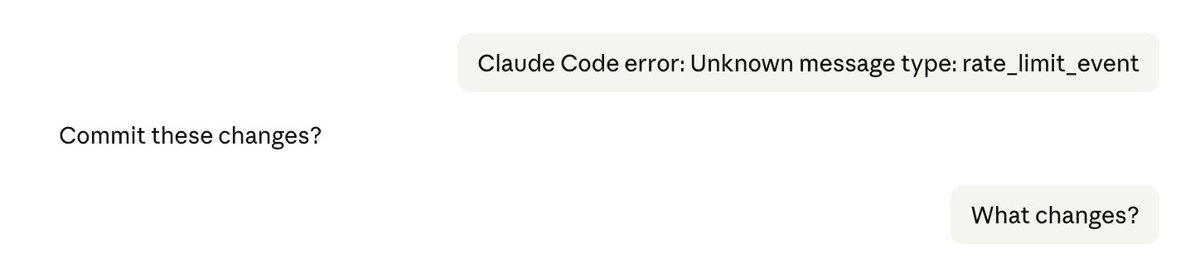

this is the most underreported problem in agentic coding right now it's not a bug. it's an architecture problem. when you split a single conversation into async subagents that each write to a shared history, you lose attribution. the system can't reliably track who said what to whom. and "who said what" is the entire foundation of instruction-following. a model that confuses its own output for a user command isn't hallucinating. it's operating on a corrupted conversational state. different failure mode. arguably worse, because it looks like compliance. this will keep happening as agents get more autonomous. more subagents, more async updates, more opportunities for the history to become incoherent. and the failure mode isn't "agent gets confused and stops." it's "agent gets confused and acts." that's the part people should be paying attention to.

Sort: