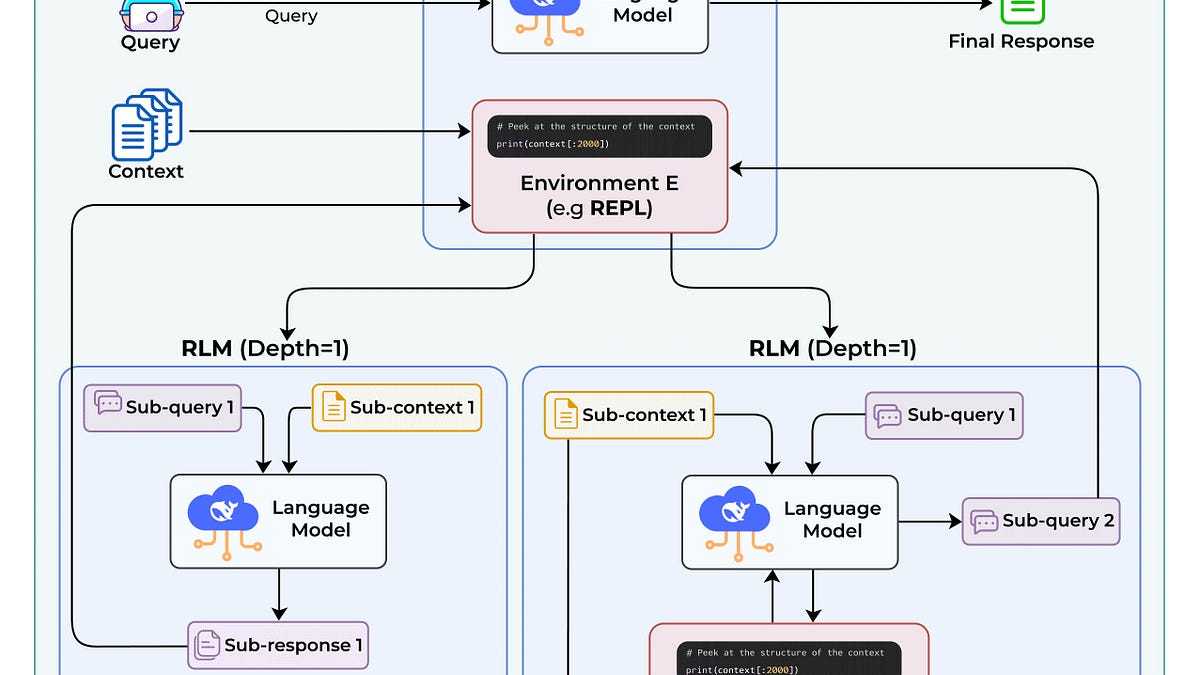

Recursive Language Models (RLMs) target the "context rot" problem, where LLM output quality degrades as a conversation or context grows long. Instead of processing all the context at once, an RLM stores the context separately and uses tools to peek, grep, partition, and recursively process smaller chunks. MIT researchers showed that an RLM using GPT-5-mini outperformed GPT-5 on
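To make the peek, grep, partition, and recurse idea concrete, here is a minimal Python sketch. It is not the researchers' implementation: every name in it (`peek`, `grep`, `partition`, `recursive_query`, `toy_model`) is hypothetical, the context is simply a string held outside the "model", and a keyword-matching function stands in for a real LLM call.

```python
import re
from typing import Callable, List

# Hypothetical stand-in for a model call: takes (query, text), returns an answer string.
ModelFn = Callable[[str, str], str]


def peek(context: str, start: int = 0, length: int = 200) -> str:
    """Return a small character window of the stored context."""
    return context[start:start + length]


def grep(context: str, pattern: str) -> List[str]:
    """Return the lines of the context that match a regular expression."""
    return [line for line in context.splitlines() if re.search(pattern, line)]


def partition(context: str, lines_per_chunk: int) -> List[str]:
    """Split the context into consecutive chunks of at most lines_per_chunk lines."""
    lines = context.splitlines()
    return [
        "\n".join(lines[i:i + lines_per_chunk])
        for i in range(0, len(lines), lines_per_chunk)
    ]


def recursive_query(query: str, context: str, model: ModelFn, max_lines: int = 50) -> str:
    """Answer directly when the context is small; otherwise split, recurse, combine."""
    lines = context.splitlines()
    if len(lines) <= max_lines:
        return model(query, context)
    partials = [
        recursive_query(query, chunk, model, max_lines)
        for chunk in partition(context, max_lines)
    ]
    combined = "\n".join(p for p in partials if p)
    combined_lines = combined.splitlines()
    if len(combined_lines) >= len(lines):
        # Guard: if the partial answers did not shrink the input, stop recursing
        # and make one call on a truncated view instead.
        return model(query, "\n".join(combined_lines[:max_lines]))
    return recursive_query(query, combined, model, max_lines)


def toy_model(query: str, text: str) -> str:
    """Assumption: a trivial 'model' that returns the lines containing the query."""
    return "\n".join(line for line in text.splitlines() if query in line)


# A 500-line context with one relevant line buried in filler.
context = "\n".join(
    "line 321: secret code 4217" if i == 321 else f"line {i}: filler"
    for i in range(500)
)

answer = recursive_query("secret", context, toy_model, max_lines=50)
print(answer)
```

Each call to the stand-in model sees at most 50 lines, never the full 500. In a real system the model itself would decide when to peek, grep, or partition; this sketch hard-codes a single strategy to show the control flow.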
