The evolution of artificial intelligence and machine learning has been rapid and largely unanticipated. This article covers six ways to manage and optimize your models for deployment and inference. Each method comes with examples and tutorials showing how to apply it to your own problem.

Table of contents

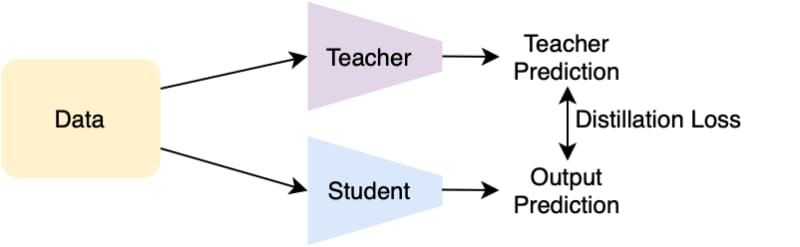

- Memory management with knowledge distillation
- Speed up inference using model quantization and layer fusion
- Using ONNX library
- Determine the mode of deployment
- Model pruning in optimizing models
- Online deep learning for model optimization
- End note