Robert Youssef @rryssf_

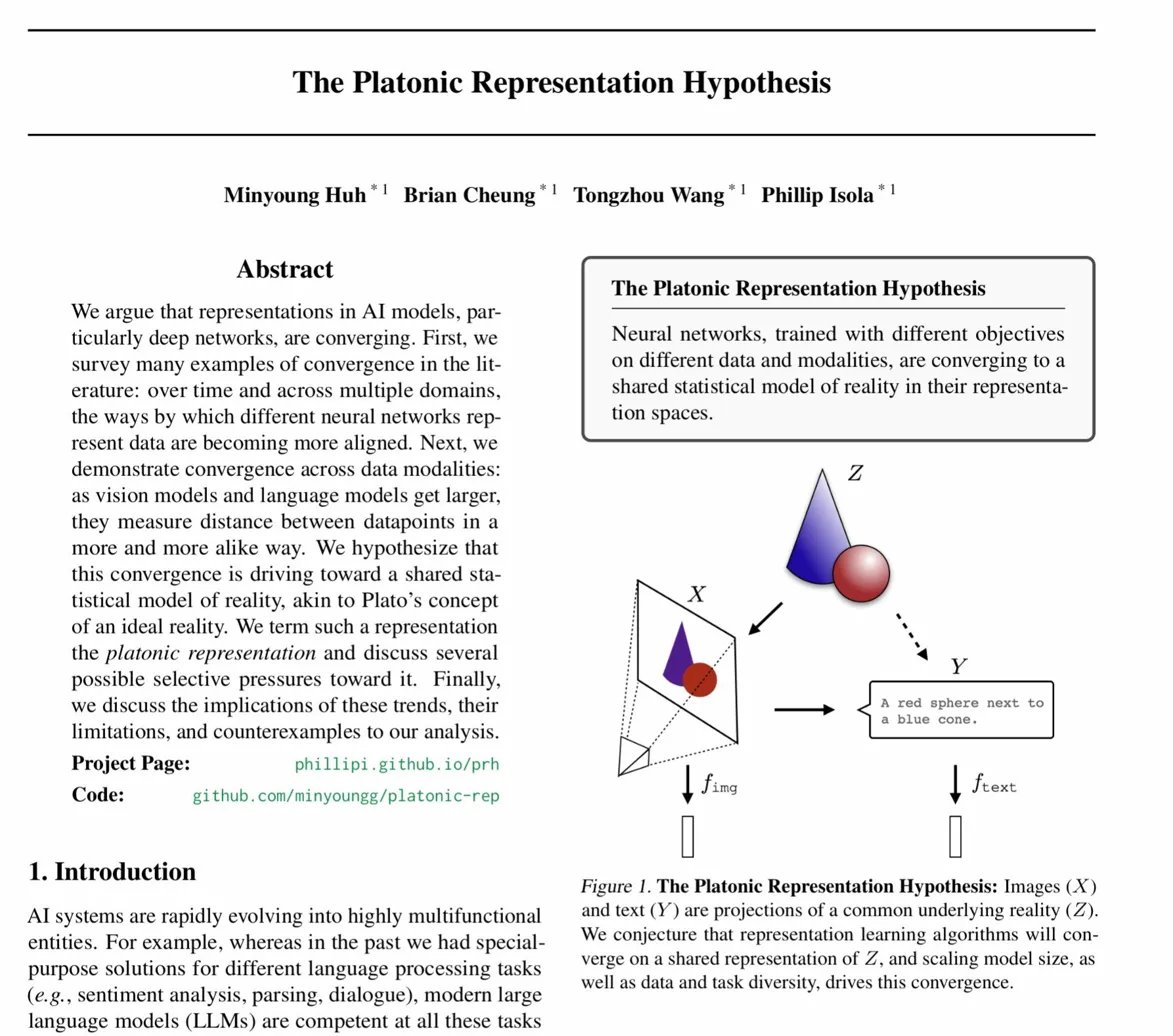

MIT researchers proposed something called the "platonic representation hypothesis" and it's one of the most fascinating ideas in ai right now 🤯 the core claim: as neural networks get bigger and train on more data, their internal representations are converging. vision models, language models, different architectures, different training objectives. they're all slowly approximating the same underlying model of reality. think of it like this. imagine a hundred cartographers mapping the same territory using completely different tools. one uses satellites, another uses sonar, another walks on foot. at first the maps look nothing alike. but as the tools improve and the coverage increases, the maps start agreeing. not because the cartographers coordinated. because the territory is real. Huh, Cheung, Wang, and Isola at MIT tested this across 78 vision models with different architectures, objectives, and training data. the result: as models scale, their representation kernels (basically how they measure similarity between data points) converge toward the same structure. the practical implications are wild if this holds. you could translate between models instead of treating each as a sealed black box. interpretability work on one system could transfer to another. alignment could happen at the representation level, not just by policing outputs. but here's where it gets honest. the best alignment score they measured (using DINOv2) was 0.16. perfect alignment would be 1.0. so we're seeing a trend toward convergence, not convergence itself. there's a massive gap between "representations are becoming more similar" and "representations are the same." also: different modalities genuinely capture different information. the text "apple" doesn't tell you if it's red or green. an image does. a symphony's emotional texture doesn't fully translate to text. there are real information asymmetries that might set hard limits on how far convergence can go. and the elephant in the room: sociological bias. we're all training on similar internet data, using similar architectures (transformers), optimizing similar objectives. is convergence evidence of a deep truth about reality, or evidence that the ai field has a monoculture problem? if every model is a transformer trained on web text, of course they'll agree. that's not philosophy. that's homogeneity. the authors are refreshingly upfront about this. they frame it as a position paper, not a proof. they discuss counterexamples. they acknowledge that domains like robotics show no convergence at all yet. the philosophical question underneath is genuinely compelling though. if sufficiently capable learners trained on different data independently discover the same representational structure, that suggests something real about the territory being mapped. maybe meaning isn't just a human convention. maybe there are natural coordinates in reality that strong enough learners keep rediscovering. or maybe we're just reading patterns into a field that hasn't diversified its methods enough to test the hypothesis properly. either way, this paper reframes how we think about what models are actually doing when they learn. they're not just memorizing patterns. they might be recovering structure. the honest take: convergence is real. the platonic interpretation is beautiful. but 0.16 out of 1.0 means we're writing philosophy on top of a trend line, not a theorem.

Sort: