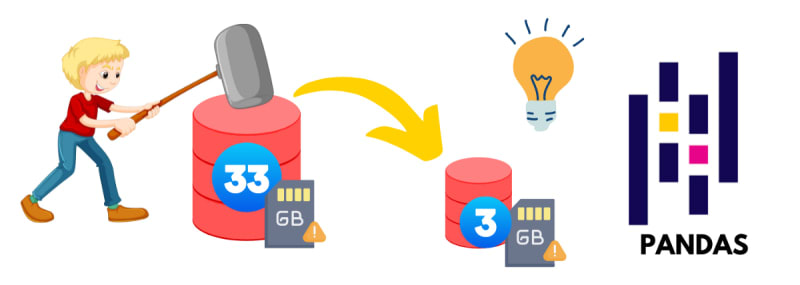

A dataset may contain anywhere from thousands to millions of rows. Small datasets load quickly, but as a dataset grows, it may become too large to fit in our system's memory. So I thought I would share how I converted a 33 GB data file into a 3 GB file using Pandas.

Table of contents

- Dataset
- Importing the Libraries
- Chunking
- Optimizing and concatenating
- Converting data frame to file format
- Reading the file