OpenAI has been developing GPT (Generative Pre-trained Transformer) models since 2018. GPT-1 was trained on the BooksCorpus dataset (5GB), with a main focus on language understanding. On Valentine’s Day 2019, GPT-2 was released with the slogan “too dangerous to release.” Its training cost was $43k.

Table of contents

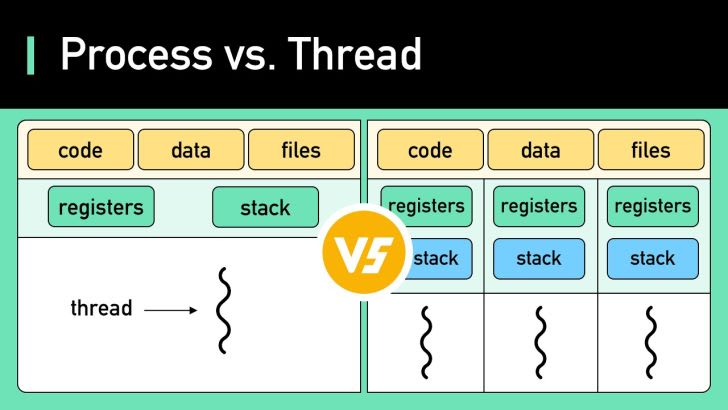

- What is the difference between Process and Thread?
- ChatGPT history
- What is a DDoS (Distributed Denial-of-Service) Attack?
- Fallacies of distributed computing
- Featured job openings