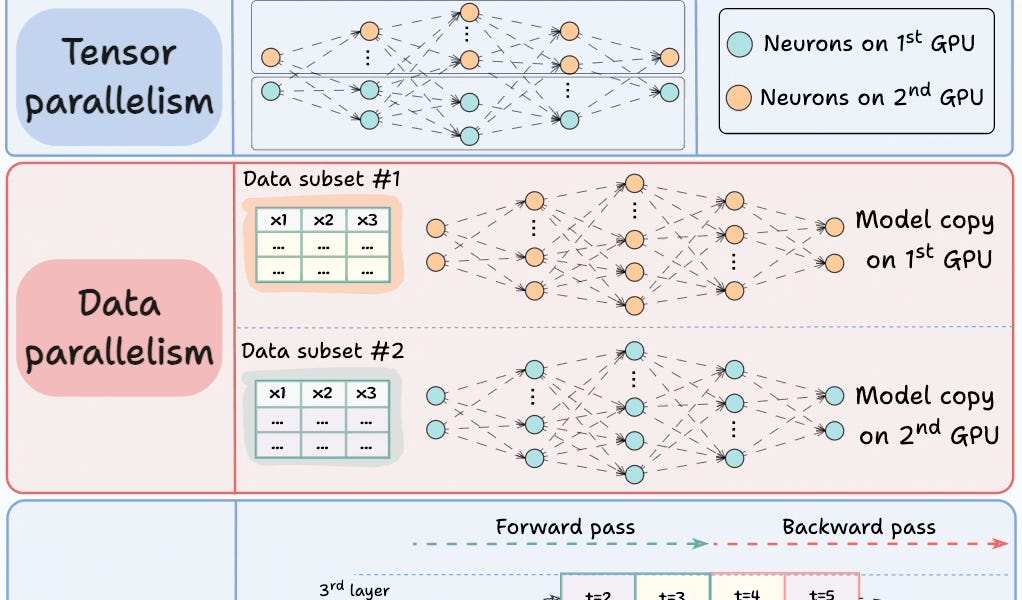

This post discusses four strategies for multi-GPU training: model parallelism, tensor parallelism, data parallelism, and pipeline parallelism.

Table of contents

- 4 Strategies for Multi-GPU Training
  - #1) Model parallelism
  - #2) Tensor parallelism
  - #3) Data parallelism
  - #4) Pipeline parallelism
- Are you overwhelmed with the amount of information in ML/DS?