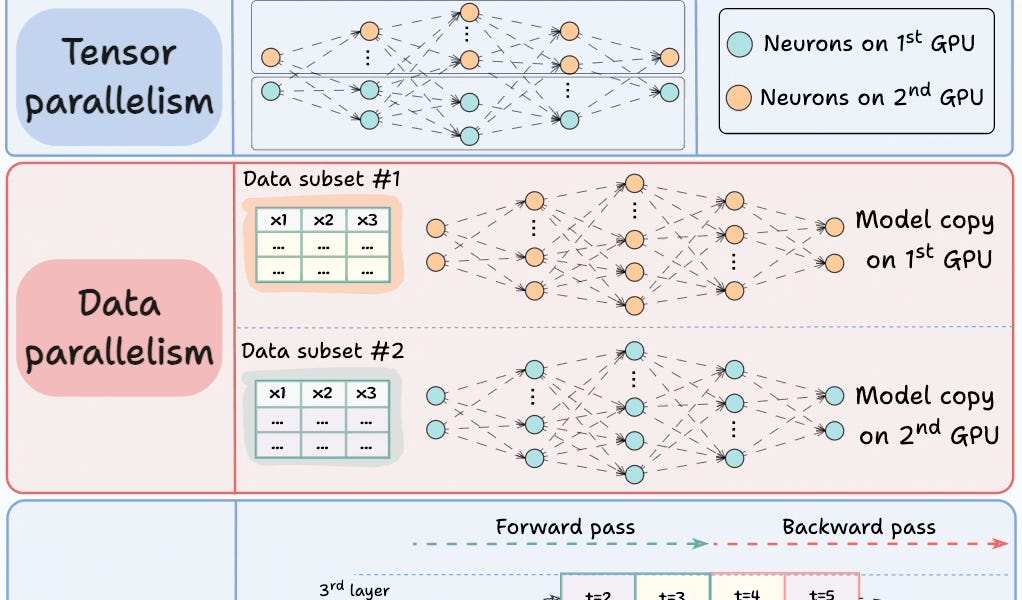

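Training deep learning models on multiple GPUs can substantially shorten training time and makes it possible to train models too large for a single GPU's memory. Four common strategies are model parallelism, tensor parallelism, data parallelism, and pipeline parallelism. In model parallelism, different parts of the model (typically different layers) are placed on different GPUs, and activations are passed from one GPU to the next. Tensor parallelism goes a level deeper and splits individual tensor operations, such as a large matrix multiplication, across multiple devices. Data parallelism replicates the full model on every GPU. Each GPU processes a different slice of the batch at the same time, and the resulting gradients are synchronized so that all replicas stay identical. Pipeline parallelism combines ideas from model and data parallelism: the model is split into stages across GPUs, and each batch is split into micro-batches, so one GPU can start on the next micro-batch while another GPU is still processing the previous one. This keeps the GPUs busier than plain model parallelism, where each GPU sits idle while it waits for the others.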
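To make the four descriptions concrete, here is a minimal, single-process Python sketch. It is a toy simulation under loud assumptions: "devices" are just list indices rather than GPUs, communication steps (activation transfer, gather, all-reduce) are ordinary list operations, and every function name (`model_parallel_forward`, `tensor_parallel_matmul`, `data_parallel_grad`, `pipeline_schedule`) is hypothetical, not part of any framework's API.

```python
import math

# Toy, single-process simulation of the four strategies. ASSUMPTION: "devices"
# are plain list indices, not GPUs, and communication (activation transfer,
# gather, all-reduce) is ordinary list manipulation. All names are hypothetical.


def matmul(x, w):
    """Row vector x (length n) times matrix w (n rows, m columns)."""
    return [sum(x[i] * w[i][j] for i in range(len(x))) for j in range(len(w[0]))]


def relu(v):
    return [max(0.0, a) for a in v]


# Model parallelism: whole layers are assigned to different devices.
def model_parallel_forward(x, layers):
    """Layer k is assumed to live on device k; returns (output, transfers)."""
    transfers = []
    for device, w in enumerate(layers):
        if device > 0:
            transfers.append((device - 1, device))  # activations move to the next device
        x = relu(matmul(x, w))
    return x, transfers


# Tensor parallelism: one layer's weight matrix is split column-wise.
def tensor_parallel_matmul(x, w, num_devices):
    m = len(w[0])
    chunk = math.ceil(m / num_devices)
    outputs = []
    for d in range(num_devices):
        cols = range(d * chunk, min((d + 1) * chunk, m))
        shard = [[row[j] for j in cols] for row in w]  # device d's slice of w
        outputs.append(matmul(x, shard))  # device d computes only its columns
    # Gather step: concatenate the per-device output columns in device order.
    return [v for part in outputs for v in part]


# Data parallelism: each device holds a full copy of the parameter w and
# processes an equal-sized shard of the batch.
def mse_grad(w, batch):
    """Gradient of the mean squared error for the model y_hat = w * x."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


def data_parallel_grad(w, batch, num_devices):
    if len(batch) % num_devices:
        raise ValueError("batch size must be divisible by num_devices")
    size = len(batch) // num_devices
    local = [mse_grad(w, batch[d * size:(d + 1) * size]) for d in range(num_devices)]
    # All-reduce step: average the per-device gradients.
    return sum(local) / num_devices


# Pipeline parallelism: the model is split into stages and the batch into
# micro-batches; stage s handles micro-batch m at time step s + m
# (forward pass only, one time step per stage).
def pipeline_schedule(num_stages, num_microbatches):
    schedule = {}
    for m in range(num_microbatches):
        for s in range(num_stages):
            schedule.setdefault(s + m, []).append((s, m))
    return schedule


if __name__ == "__main__":
    x = [1.0, -2.0, 3.0]
    w1 = [[1.0, 0.0, 2.0, -1.0],
          [0.5, 1.0, 0.0, 1.0],
          [0.0, 2.0, 1.0, 0.5]]
    w2 = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0], [0.0, 1.0]]
    print(model_parallel_forward(x, [w1, w2]))  # ([5.0, 4.0], [(0, 1)])
    print(tensor_parallel_matmul(x, w1, 2) == matmul(x, w1))  # True

    batch = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9), (4.0, 8.2)]
    print(math.isclose(data_parallel_grad(1.5, batch, 2), mse_grad(1.5, batch)))  # True

    print(len(pipeline_schedule(4, 8)), 4 * 8)  # 11 32
```

Two design choices are worth noting. The data-parallel average equals the full-batch gradient only because every shard has the same size, which is why the sketch rejects uneven splits. The pipeline numbers show the point of micro-batching: with 4 stages and 8 micro-batches, the forward pass finishes in 4 + 8 - 1 = 11 time steps instead of the 32 needed if each micro-batch had to clear all four stages before the next one entered.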
