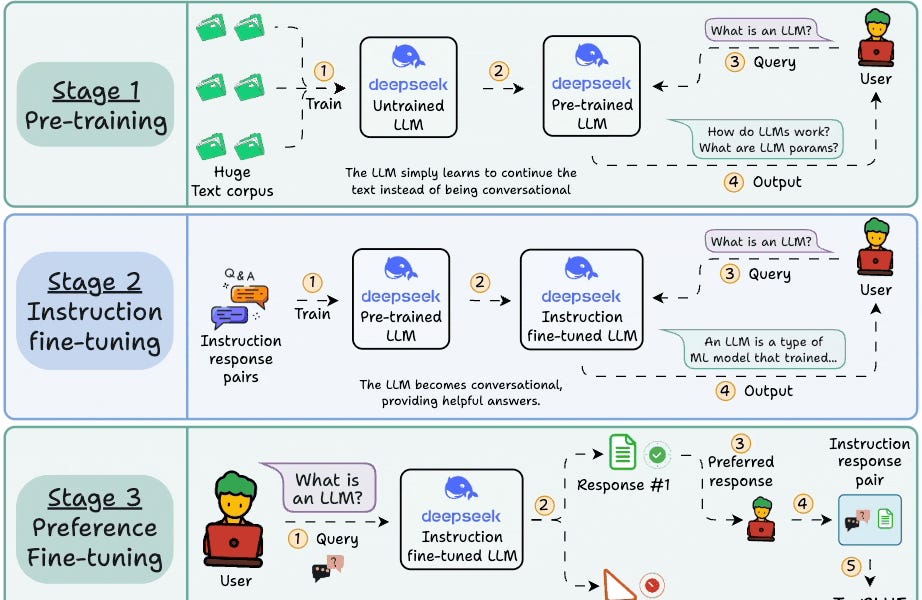

Training large language models from scratch involves four key stages: pre-training on massive text corpora to learn language basics, instruction fine-tuning to make models conversational and follow commands, preference fine-tuning using human feedback (RLHF) to align with human preferences, and reasoning fine-tuning for mathematical and logical tasks using correctness as a reward signal. Each stage builds upon the previous one to create increasingly capable and aligned AI systems.

Table of contents

- Apply LLMs to any Audio File in 5 lines of code
- 4 stages of training LLMs from scratch
- 0️⃣ Randomly initialized LLM
- 1️⃣ Pre-training
- 2️⃣ Instruction fine-tuning
- 3️⃣ Preference fine-tuning (PFT)
- 4️⃣ Reasoning fine-tuning
- P.S. For those wanting to develop “Industry ML” expertise:
