16 Techniques to Optimize Neural Network Training

This title could be clearer and more informative.Try out Clickbait Shieldfor free (5 uses left this month).

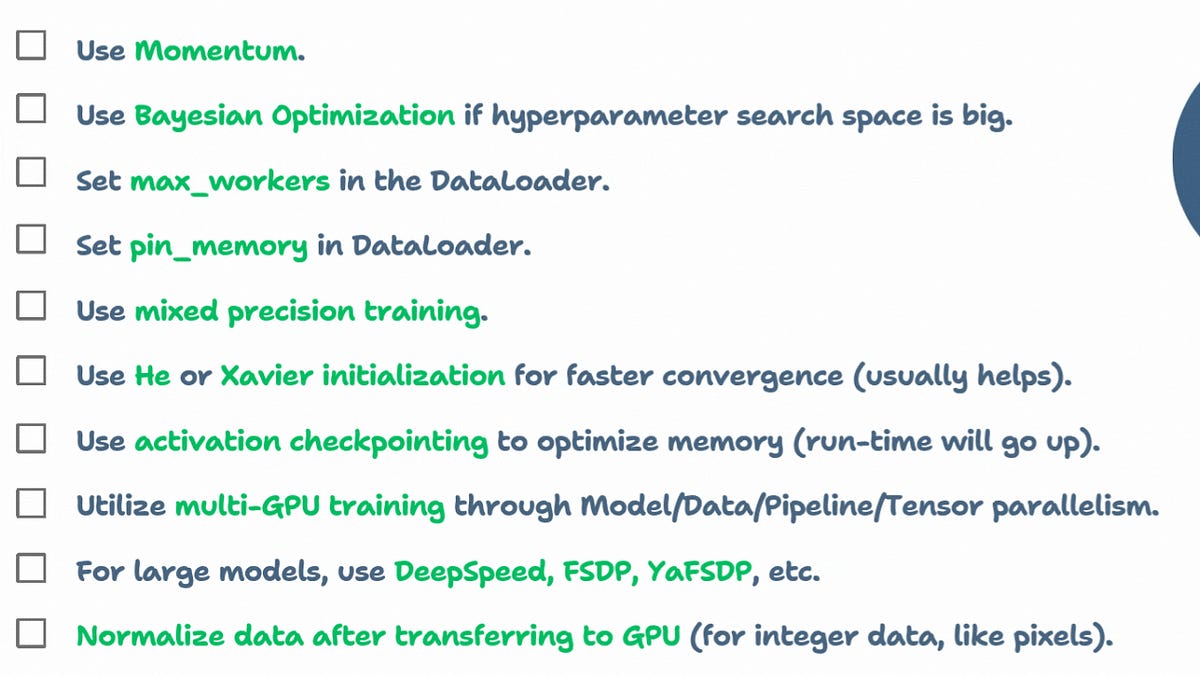

A curated list of 16 techniques to speed up and optimize neural network training. Covers basics like using AdamW, GPUs, and large batch sizes, then goes deeper into Bayesian hyperparameter optimization, mixed precision training (float16/float32), He/Xavier weight initialization, multi-GPU parallelism strategies (model/data/pipeline/tensor), DeepSpeed and FSDP for large models, activation checkpointing for memory reduction, GPU-side data normalization, gradient accumulation, direct GPU tensor creation in PyTorch, and DataLoader tuning with max_workers and pin_memory for CPU-GPU overlap.

Table of contents

Training LLM Agents using RL without writing any custom reward functions16 techniques to optimize neural network trainingP.S. For those wanting to develop “Industry ML” expertise:Sort: